Why My Mac Mini M4 Outperforms Dual RTX 3090s for LLM Inference

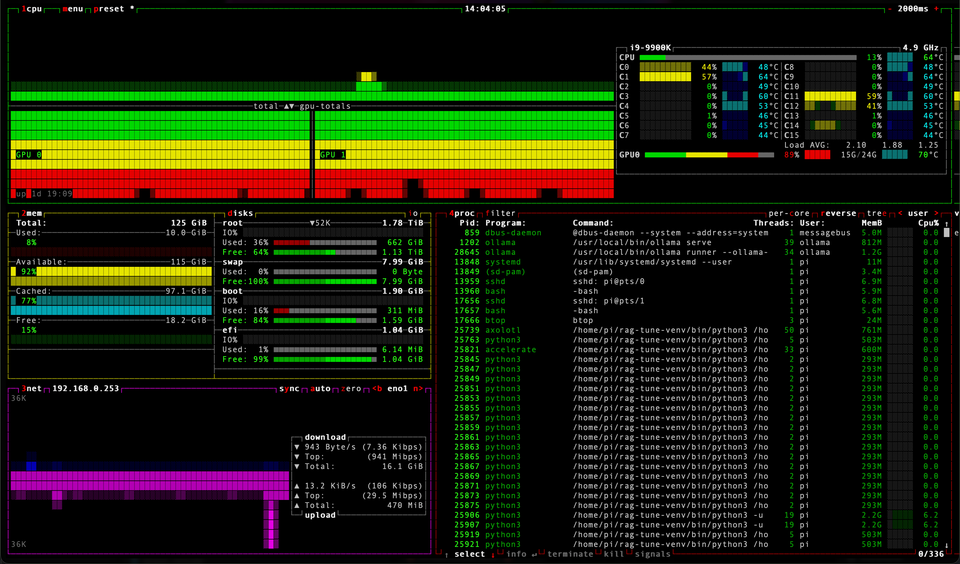

I built a dual RTX 3090 server for local LLM inference. A Mac Mini M4 turned out to be 27% faster and 22× more efficient. Here's why memory bandwidth beats raw GPU power.

Fine-tuning open-source AI models for deep domain expertise in enterprise networking

SamanthAI: my artificial intelligence learning lab, all about LLMs and personal assistants

In this blog post, we'll set up a powerful AI development environment with Docker Compose. The setup runs the Ollama language model server alongside Open-WebUI, a web interface for it, with both containerized for ease of use.

Language processing has come a long way, thanks to the rise of large language models (LLMs). However, leveraging these advanced technologies often requires significant computational resources or reliance on cloud services.

In this post, we'll show how automation can make a complex tool like Oobabooga accessible to a wider audience by providing an auto-install script. Let's do this!

Let's build an AI character that acts like Samantha in the movie Her...

Exploring TTS options further with an AI: the result is mesmerising

Text-to-speech with an AI: what could go wrong?

AI, LLMs, models... a very interesting topic. I'm exploring it, and the more I learn, the more I want to go further... Am I an AI? 100% flesh and blood here :)