Setting Up Qwen3-TTS with GPU Acceleration on Ubuntu Server

I spent a few sleepless nights trying different TTS stacks (Qwen3-TTS, Fish Speech and a handful of other libraries) before finally getting a stable, GPU-accelerated text-to-speech API humming on my AI01 server. Below is the exact recipe that worked.

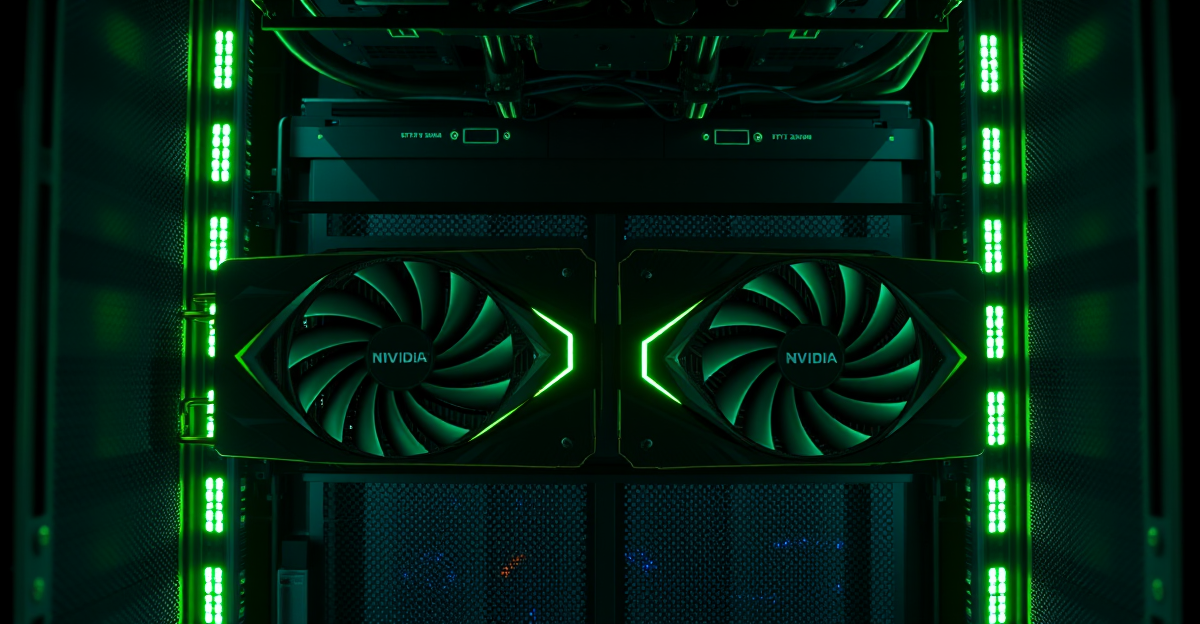

Hardware Setup

- Server: AI01 (192.168.0.253)

- GPU: 2x NVIDIA RTX 3090 (24GB VRAM each)

- OS: Ubuntu Server with NVIDIA Driver 535.288.01

- Allocation: GPU 0 for ComfyUI, GPU 1 for Qwen3-TTS

Prerequisites

Before you dive in, make sure you have the following:

- NVIDIA drivers installed and working (`nvidia-smi` should list your GPUs)

- A CUDA 12.x compatible system

- Python 3.10 or newer, plus pip
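To check the Python version and the driver before running the installer, a small script like the one below can help. It is a minimal sketch: the function name `check_prerequisites` is illustrative, and it only checks the Python version and whether `nvidia-smi -L` lists at least one GPU.

```python
from __future__ import annotations

import shutil
import subprocess
import sys


def check_prerequisites(min_python: tuple[int, int] = (3, 10)) -> list[str]:
    """Return a list of problems; an empty list means the basics look fine."""
    problems: list[str] = []

    # Python 3.10 or newer
    if sys.version_info[:2] < min_python:
        problems.append(
            f"Python {min_python[0]}.{min_python[1]}+ required, "
            f"found {sys.version_info.major}.{sys.version_info.minor}"
        )

    # nvidia-smi must exist and list at least one GPU ("nvidia-smi -L" lists GPUs)
    smi = shutil.which("nvidia-smi")
    if smi is None:
        problems.append("nvidia-smi not found: NVIDIA driver missing or not on PATH")
    else:
        result = subprocess.run([smi, "-L"], capture_output=True, text=True)
        if result.returncode != 0 or "GPU" not in result.stdout:
            problems.append("nvidia-smi ran but did not list any GPUs")

    return problems


if __name__ == "__main__":
    issues = check_prerequisites()
    for issue in issues:
        print("PROBLEM:", issue)
    print("OK" if not issues else f"{len(issues)} problem(s) found")
```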

Installation Script

Create the installation script at /home/pi/qwen3tts.sh:

```bash
#!/usr/bin/env bash
set -euo pipefail

### === Config ===
INSTALL_DIR="${INSTALL_DIR:-/opt/qwen3-tts}"
VENV_DIR="${VENV_DIR:-$INSTALL_DIR/venv}"
PYTHON_BIN="${PYTHON_BIN:-python3}"
PORT="${PORT:-8000}"

### === System deps ===
sudo apt-get update -y
sudo apt-get install -y python3 python3-venv python3-pip ffmpeg git

### === Create install dir ===
sudo mkdir -p "$INSTALL_DIR"
sudo chown -R "$(id -u):$(id -g)" "$INSTALL_DIR"

### === Create venv ===
"$PYTHON_BIN" -m venv "$VENV_DIR"
source "$VENV_DIR/bin/activate"
pip install --upgrade pip wheel setuptools

### === Install Python deps ===
pip install --upgrade \
    huggingface_hub \
    fastapi uvicorn \
    transformers accelerate \
    soundfile numpy

### === Install Torch with CUDA support ===
pip install --upgrade torch --index-url https://download.pytorch.org/whl/cu121

### === Install Qwen3-TTS ===
pip install qwen-tts

echo "Installation complete!"
echo "Activate venv: source $VENV_DIR/bin/activate"
```

Run the script:

```bash
chmod +x /home/pi/qwen3tts.sh
/home/pi/qwen3tts.sh
```

API Server
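The server imports torch, fastapi, uvicorn, pydantic, soundfile and qwen_tts, so before writing it, a quick check that the venv can actually import them saves debugging later. This sketch uses only `importlib.util.find_spec`; the helper name `missing_modules` is illustrative. Run it with the venv's interpreter, e.g. `/opt/qwen3-tts/venv/bin/python`.

```python
from __future__ import annotations

import importlib.util

# Modules that server.py imports (the PyPI package "qwen-tts" provides "qwen_tts")
REQUIRED_MODULES = ["torch", "fastapi", "uvicorn", "pydantic", "soundfile", "qwen_tts"]


def missing_modules(names: list[str]) -> list[str]:
    """Return the top-level module names that cannot be found in this interpreter."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules(REQUIRED_MODULES)
    if missing:
        print("Missing:", ", ".join(missing))
    else:
        print("All server dependencies are importable")
```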

Create the FastAPI server at /opt/qwen3-tts/server.py:

```python
import os

# Expose only GPU 1 to this process (GPU 0 stays free for ComfyUI).
# Set this before importing torch so CUDA never initializes with GPU 0 visible.
os.environ["CUDA_VISIBLE_DEVICES"] = "1"

import uuid

import soundfile as sf
import torch
from fastapi import FastAPI, HTTPException
from fastapi.responses import FileResponse
from pydantic import BaseModel
from starlette.background import BackgroundTask

from qwen_tts import Qwen3TTSModel

app = FastAPI(title="Qwen3-TTS API")

# Load the model once at startup. Inside this process, GPU 1 is the
# only visible device, so it is addressed as "cuda:0".
print("Loading Qwen3-TTS model on GPU 1...")
model = Qwen3TTSModel.from_pretrained(
    "Qwen/Qwen3-TTS-12Hz-1.7B-Base",
    device_map="cuda:0",
    torch_dtype=torch.float16,
)
print(f"Model loaded on device: {model.device}")

# The model expects full language names, so map short codes for convenience
LANG_MAP = {
    "fr": "french",
    "en": "english",
    "de": "german",
    "es": "spanish",
    "it": "italian",
    "pt": "portuguese",
    "ru": "russian",
    "zh": "chinese",
    "ja": "japanese",
    "ko": "korean",
}


class TTSRequest(BaseModel):
    text: str
    voice: str = "fr"  # language code, also used to pick the voice reference file


@app.get("/health")
def health():
    return {
        "status": "ok",
        "gpu": torch.cuda.is_available(),
        "device": str(model.device),
    }


@app.post("/generate")
async def generate(req: TTSRequest):
    # Note: generate_voice_clone is a blocking call, so while it runs this
    # async endpoint holds the event loop and other requests (even /health) wait.
    try:
        output_path = f"/tmp/{uuid.uuid4()}.wav"

        # Map language code to full name (unknown values are passed through)
        lang = LANG_MAP.get(req.voice, req.voice)

        # Prefer a per-language voice reference, fall back to the default one
        voice_ref = f"/home/pi/voice-refs/samantha_voice_{req.voice}.wav"
        if not os.path.exists(voice_ref):
            voice_ref = "/home/pi/voice-refs/samantha_voice.wav"

        # x_vector_only_mode=True uses only the speaker embedding,
        # so no transcript (ref_text) of the reference audio is needed
        audio_list, sr = model.generate_voice_clone(
            text=req.text,
            language=lang,
            ref_audio=voice_ref,
            x_vector_only_mode=True,
        )

        audio = audio_list[0]
        sf.write(output_path, audio, sr)

        # Delete the temporary file once the response has been sent
        return FileResponse(
            output_path,
            media_type="audio/wav",
            background=BackgroundTask(os.remove, output_path),
        )
    except Exception as e:
        raise HTTPException(status_code=500, detail=str(e))


if __name__ == "__main__":
    import uvicorn

    uvicorn.run(app, host="0.0.0.0", port=8000)
```
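The `device_map="cuda:0"` line can look like a typo next to the "GPU 1" comments. `CUDA_VISIBLE_DEVICES` renumbers the visible GPUs from zero inside the process, so GPU 1 becomes `cuda:0`. The helper below (a hypothetical `physical_gpu` function, not part of any library) just spells out that mapping. One caveat worth knowing: CUDA's default device numbering is not guaranteed to match `nvidia-smi`'s; setting `CUDA_DEVICE_ORDER=PCI_BUS_ID` makes them agree.

```python
from __future__ import annotations


def physical_gpu(cuda_visible_devices: str | None, logical_index: int) -> str:
    """Return the entry of CUDA_VISIBLE_DEVICES that cuda:<logical_index> refers to.

    With the variable unset, every GPU is visible and the index is unchanged.
    Otherwise the listed devices are renumbered 0, 1, ... in the listed order.
    """
    if cuda_visible_devices is None:
        return str(logical_index)
    visible = [d.strip() for d in cuda_visible_devices.split(",") if d.strip()]
    if not 0 <= logical_index < len(visible):
        raise IndexError(
            f"cuda:{logical_index} does not exist; only {len(visible)} GPU(s) visible"
        )
    return visible[logical_index]


# server.py sets CUDA_VISIBLE_DEVICES="1", so cuda:0 is GPU 1
print(physical_gpu("1", 0))  # prints 1
```

Asking for `cuda:1` in this setup raises an `IndexError` in the sketch, just as PyTorch would report an invalid device, because only one GPU is visible.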

Voice References

Drop your voice reference files into /home/pi/voice-refs/:

```bash
mkdir -p /home/pi/voice-refs
# Add your reference audio files (5-30 seconds of clear speech):
#   samantha_voice_fr.wav   (French)
#   samantha_voice.wav      (English, and the fallback for any language without its own file)
```

The `x_vector_only_mode=True` flag extracts only the voice embedding, so you don't need to transcribe the reference audio.
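Since the reference clips should be 5 to 30 seconds of clear speech, a quick duration check can catch a bad file before it reaches the model. This sketch uses Python's standard `wave` module, so it only handles uncompressed PCM WAV files (float or compressed WAVs raise `wave.Error`); the function name `check_reference` is illustrative.

```python
from __future__ import annotations

import wave
from pathlib import Path


def check_reference(
    path: str | Path, min_s: float = 5.0, max_s: float = 30.0
) -> tuple[float, bool]:
    """Return (duration in seconds, whether it falls within [min_s, max_s])."""
    with wave.open(str(path), "rb") as wav:
        duration = wav.getnframes() / wav.getframerate()
    return duration, min_s <= duration <= max_s


if __name__ == "__main__":
    for ref in sorted(Path("/home/pi/voice-refs").glob("*.wav")):
        seconds, ok = check_reference(ref)
        status = "ok" if ok else "outside 5-30 s"
        print(f"{ref.name}: {seconds:.1f} s ({status})")
```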

Start the Server

```bash
cd /opt/qwen3-tts
./venv/bin/uvicorn server:app --host 0.0.0.0 --port 8000
```

Or run it in the background:

```bash
nohup ./venv/bin/uvicorn server:app --host 0.0.0.0 --port 8000 > server.log 2>&1 &
```

API Usage
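Because server.py loads the model at import time, the API only answers once loading has finished, which matters when the server was started in the background. A script calling it can poll `/health` first and then post to `/generate`. This is a standard-library sketch: the host and port match the examples in this section, while the function names, timeouts and output filename are illustrative.

```python
from __future__ import annotations

import json
import time
import urllib.request

BASE_URL = "http://192.168.0.253:8000"  # AI01, as in the curl examples


def wait_for_health(url: str, timeout: float = 120.0, interval: float = 2.0) -> bool:
    """Poll a health URL until it returns HTTP 200 or the timeout expires."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        try:
            with urllib.request.urlopen(url, timeout=interval) as resp:
                if resp.status == 200:
                    return True
        except OSError:  # connection refused, timeouts and HTTP errors (URLError)
            pass
        time.sleep(interval)
    return False


def generate_speech(text: str, voice: str, out_path: str, base_url: str = BASE_URL) -> None:
    """POST to /generate and save the returned WAV bytes to out_path."""
    payload = json.dumps({"text": text, "voice": voice}).encode("utf-8")
    req = urllib.request.Request(
        f"{base_url}/generate",
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=120) as resp, open(out_path, "wb") as f:
        f.write(resp.read())


# Example usage against the running server:
#   if wait_for_health(f"{BASE_URL}/health"):
#       generate_speech("Bonjour, ceci est un test.", "fr", "output.wav")
```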

Health check:

```bash
curl http://192.168.0.253:8000/health
```

Generate speech (French):

```bash
curl -X POST http://192.168.0.253:8000/generate \
  -H "Content-Type: application/json" \
  -d '{"text": "Bonjour, ceci est un test.", "voice": "fr"}' \
  --output output.wav
```

Generate speech (English):

```bash
curl -X POST http://192.168.0.253:8000/generate \
  -H "Content-Type: application/json" \
  -d '{"text": "Hello, this is a test.", "voice": "en"}' \
  --output output.wav
```

Verify GPU Usage

Run nvidia-smi while generating audio to confirm GPU utilization. GPU 1 should show ~5GB VRAM usage and active compute during inference.
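To watch this from a script rather than by eye, `nvidia-smi` can print machine-readable values with `--query-gpu` and `--format=csv,noheader,nounits` (memory in MiB, utilization in percent). The sketch below samples one GPU once per interval; the function names and the 10 x 1 s sampling are illustrative choices, and it assumes the queried fields are numeric, which holds for cards that report them.

```python
from __future__ import annotations

import subprocess
import time

QUERY = "index,memory.used,memory.total,utilization.gpu"


def parse_gpu_csv(text: str) -> list[dict[str, int]]:
    """Parse `nvidia-smi --query-gpu=... --format=csv,noheader,nounits` output."""
    rows = []
    for line in text.strip().splitlines():
        index, used, total, util = (int(v.strip()) for v in line.split(","))
        rows.append({"index": index, "used_mib": used, "total_mib": total, "util_pct": util})
    return rows


def sample_gpus() -> list[dict[str, int]]:
    """Run nvidia-smi once and return one dict per GPU."""
    out = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY}", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return parse_gpu_csv(out)


def watch_gpu(index: int, samples: int = 10, interval: float = 1.0) -> None:
    """Print one GPU's memory use and utilization once per interval."""
    for _ in range(samples):
        gpu = next((g for g in sample_gpus() if g["index"] == index), None)
        if gpu is None:
            raise ValueError(f"No GPU with index {index}")
        print(f"GPU {index}: {gpu['used_mib']} / {gpu['total_mib']} MiB, {gpu['util_pct']}% busy")
        time.sleep(interval)
```

Calling `watch_gpu(1)` while a `/generate` request is in flight should show the memory and utilization figures described above.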

Troubleshooting

Language Error

Error: Unsupported languages: ['fr']

Solution: Use full language names: french, english, german, etc. The LANG_MAP in server.py handles this automatically.

ref_text Required Error

Error: ref_text is required when x_vector_only_mode=False

Solution: Set x_vector_only_mode=True in the generate_voice_clone call, or pass a transcript of the reference audio as ref_text.

GPU Not Used

If the model runs on CPU, verify that:

- `CUDA_VISIBLE_DEVICES=1` is set at the top of server.py

- `device_map="cuda:0"` is passed to `from_pretrained()`

- PyTorch with CUDA support is installed:

```bash
pip install torch --index-url https://download.pytorch.org/whl/cu121
```
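A quick way to tell which of these is the problem is to ask PyTorch directly from the venv's interpreter. A CPU-only wheel reports `torch.version.cuda` as `None`, while a CUDA build that sees no GPU reports a CUDA version but zero devices. The function below is a small sketch; run it with the same `CUDA_VISIBLE_DEVICES` value as the server, for example `CUDA_VISIBLE_DEVICES=1 ./venv/bin/python diagnose.py` (the file name is hypothetical).

```python
from __future__ import annotations

import os


def cuda_report() -> dict[str, object]:
    """Summarize what PyTorch can see; safe to call even without torch installed."""
    report: dict[str, object] = {
        "CUDA_VISIBLE_DEVICES": os.environ.get("CUDA_VISIBLE_DEVICES"),
    }
    try:
        import torch
    except ImportError:
        report["torch_installed"] = False
        return report

    report["torch_installed"] = True
    report["torch_version"] = torch.__version__
    report["built_with_cuda"] = torch.version.cuda  # None for CPU-only wheels
    report["cuda_available"] = torch.cuda.is_available()
    report["device_count"] = torch.cuda.device_count()
    report["devices"] = [
        torch.cuda.get_device_name(i) for i in range(torch.cuda.device_count())
    ]
    return report


if __name__ == "__main__":
    for key, value in cuda_report().items():
        print(f"{key}: {value}")
```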

Supported Languages

The model supports: auto, chinese, english, french, german, italian, japanese, korean, portuguese, russian, spanish
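This list can also be used to reject unknown codes up front. In server.py an unsupported value only fails inside `generate_voice_clone`, where the catch-all handler turns it into a 500. The sketch below (a hypothetical `resolve_language` helper that reuses the `LANG_MAP` codes) raises a `ValueError` that the endpoint could translate into a 400 instead.

```python
SUPPORTED_LANGUAGES = {
    "auto", "chinese", "english", "french", "german", "italian",
    "japanese", "korean", "portuguese", "russian", "spanish",
}

# Same short codes as LANG_MAP in server.py
LANG_MAP = {
    "fr": "french", "en": "english", "de": "german", "es": "spanish",
    "it": "italian", "pt": "portuguese", "ru": "russian", "zh": "chinese",
    "ja": "japanese", "ko": "korean",
}


def resolve_language(code: str) -> str:
    """Map a short code or full name to a supported language name, or raise ValueError."""
    lang = LANG_MAP.get(code.lower(), code.lower())
    if lang not in SUPPORTED_LANGUAGES:
        raise ValueError(
            f"Unsupported language {code!r}; use one of {sorted(SUPPORTED_LANGUAGES)} "
            f"or a code from {sorted(LANG_MAP)}"
        )
    return lang


# In the endpoint, call it BEFORE the try block; otherwise the catch-all
# "except Exception" would turn the 400 back into a 500:
#     try:
#         lang = resolve_language(req.voice)
#     except ValueError as e:
#         raise HTTPException(status_code=400, detail=str(e))
```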

Conclusion

This setup delivers a production-ready TTS API powered by dedicated GPU hardware. The model uses around 5GB of VRAM and responds in under 10 seconds for typical phrases. With voice cloning via x-vector embeddings, you can build consistent voice personas without transcribing the audio.

Update: I have since moved from Qwen3-TTS to Chatterbox Multilingual, which handles French much better out of the box and requires far less tuning. That said, this guide remains useful if you specifically want to try Qwen3-TTS, or if your use case is English-only, where it performs well.