What’s the Vulnerability? (CVE-2025-27152)

Axios is a widely used HTTP client for Node.js. It allows requests with custom headers and follows redirects by default.

In Axios versions below 0.30.0, and from 1.0.0 up to but not including 1.8.2, a bug in the redirect logic causes headers, including any authorization headers present on the original request, to be forwarded to the target of a 3xx redirect. An attacker can exploit this for SSRF and cause the service to leak credentials that were meant for an internal endpoint.

CVE-2025-27152 (GHSA-jr5f-v2jv-69x6) has a CVSS v4 score of 7.7 (High) and v3 score of 5.3 (Medium). The fix is to upgrade Axios to >= 1.8.2 (or to >= 0.30.0 on the 0.x line).

Impact: If a vulnerable service receives an intentionally crafted redirect to an attacker-controlled host, the service will send its authorization headers to that host. The attacker can then use those leaked tokens to access other internal resources.

I had never seen a real-world exploit of this issue before. It was a reminder that even very common dependencies can become the entry point for an attack.

Immediate Response: A Quick Scan

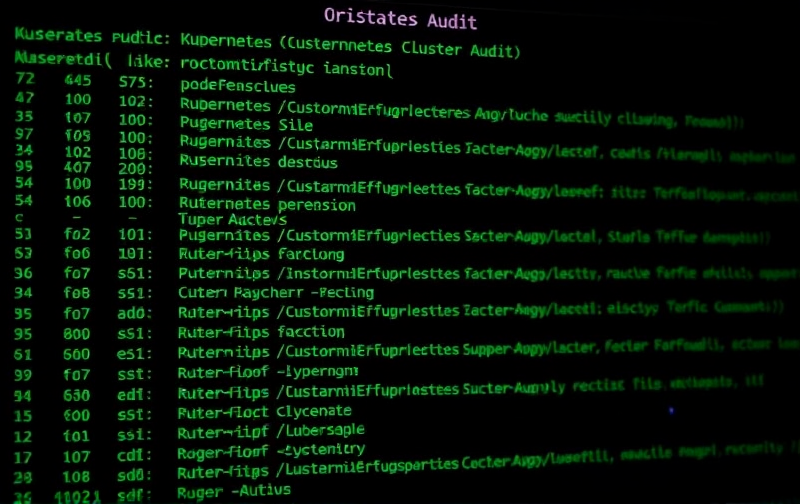

My first step was to enumerate Axios versions installed across the cluster. With Talos and its tool I ran a quick command:

Note: In many pods the directory was not mounted, so the command returned nothing. My custom script checks for the directory first to avoid errors.
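The original command is not reproduced here, but the "check first, then read" idea can be sketched in Node.js. This is a minimal illustration, not the script used in the cluster: it assumes dependencies live under `node_modules/axios/package.json` inside each application root, and the function name `findAxiosVersion` is made up for this example.

```javascript
// Sketch: report the Axios version found under each application root.
// Assumption: dependencies live in <root>/node_modules/axios/package.json.
const fs = require('node:fs');
const path = require('node:path');

function findAxiosVersion(appRoot) {
  const manifest = path.join(appRoot, 'node_modules', 'axios', 'package.json');
  // Check for the file first so missing or unmounted directories do not throw.
  if (!fs.existsSync(manifest)) return null;
  try {
    return JSON.parse(fs.readFileSync(manifest, 'utf8')).version ?? null;
  } catch {
    return null; // unreadable or malformed manifest
  }
}

// Hypothetical usage: node scan-axios.js /app /usr/src/app
for (const root of process.argv.slice(2)) {
  console.log(`${root}\t${findAxiosVersion(root) ?? 'no Axios'}`);
}
```

Run inside a container (or against an extracted image filesystem), this prints one line per root and never fails on a missing directory.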

The output highlighted the following:

| Application | Axios Version |
|---|---|
| Nightscout 15.0.6 | 0.21.4 |
| Uptime Kuma 2.2.1 | 0.30.3 |
| Outline Enterprise | 1.13.2 |
| Ghost / n8n / Twenty CRM / Karakeep / Open WebUI | no Axios |
| OpenClaw (service on SAMANTHA01) | 1.14.0 |

Only Nightscout was clearly vulnerable. Uptime Kuma’s 0.30.3 falls between 0.30.0 and 1.0.0, outside the affected ranges (< 0.30.0, or >= 1.0.0 and < 1.8.2), so it is likely safe. Out of caution, I treated it as exposed until I could verify.
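The range check is easy to get wrong by eye, so here is a small sketch of it in plain JavaScript with no semver dependency. The ranges are the ones stated in this article; the function names are illustrative, and pre-release tags are ignored for simplicity.

```javascript
// Parse "major.minor.patch" into numbers; pre-release tags are ignored.
function parseVersion(v) {
  const m = /^(\d+)\.(\d+)\.(\d+)/.exec(String(v).trim());
  return m ? m.slice(1).map(Number) : null;
}

// Compare two [major, minor, patch] arrays: negative, zero, or positive.
function compare(a, b) {
  for (let i = 0; i < 3; i++) {
    if (a[i] !== b[i]) return a[i] - b[i];
  }
  return 0;
}

// Affected ranges as stated in this article: < 0.30.0, or >= 1.0.0 and < 1.8.2.
function classifyAxios(version) {
  const v = parseVersion(version);
  if (!v) return 'unknown';
  const affected =
    compare(v, [0, 30, 0]) < 0 ||
    (compare(v, [1, 0, 0]) >= 0 && compare(v, [1, 8, 2]) < 0);
  return affected ? 'vulnerable' : 'safe';
}

for (const v of ['0.21.4', '0.30.3', '1.13.2', '1.14.0']) {
  console.log(v, classifyAxios(v));
}
```

By version number alone, only 0.21.4 is flagged. A version check says nothing about how an application uses Axios, which is why a "safe" result can still deserve a manual look.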

The Attack Scenario

In practice, an attacker could:

- Send a request to a service running our vulnerable Axios instance.

- Point the request at an external URL that answers with a 302 redirect, either back to an internal host (the SSRF case) or to a server the attacker controls.

- Axios follows the redirect and forwards the authorization headers (or any custom headers) to the target.

- If the target is attacker-controlled, the attacker captures those headers and then uses them to impersonate the service.

Because Axios follows redirects by default, any application that performs external HTTP calls - for example a sync script or an admin tool - could be compromised.
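To make the risk concrete, the sketch below shows the defensive rule that a redirect-following client is expected to apply: drop credential-bearing headers whenever a redirect changes origin. This is an illustration of the principle, not Axios's implementation, and the header list and function name are assumptions.

```javascript
// Headers commonly treated as credentials; this list is an assumption, extend as needed.
const SENSITIVE = new Set(['authorization', 'cookie', 'proxy-authorization']);

function headersForRedirect(fromUrl, location, headers) {
  // Resolve relative Location values against the original URL.
  const from = new URL(fromUrl);
  const to = new URL(location, from);
  // Same origin: the original headers are still appropriate.
  if (from.origin === to.origin) return { ...headers };
  // Origin changed: keep only headers that are not credential-bearing.
  return Object.fromEntries(
    Object.entries(headers).filter(([name]) => !SENSITIVE.has(name.toLowerCase()))
  );
}

const original = { Authorization: 'Bearer example-token', Accept: 'application/json' };
console.log(headersForRedirect('https://api.internal.example/v1', 'https://attacker.example/x', original));
console.log(headersForRedirect('https://api.internal.example/v1', '/v2', original));
```

The first call returns only the `Accept` header; the second keeps everything because the redirect stays on the same origin.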

Hardening Strategy: NetworkPolicies

Updating all dependencies to a patched version is the preferred fix, but it isn’t always possible in a production environment. We were already in the middle of a deployment cycle, so the quickest and safest mitigation was to block unwanted outbound traffic with Kubernetes NetworkPolicies.

Why NetworkPolicy?

- Zero-trust principle: allow only the traffic that is explicitly required.

- Least-privilege: each pod gets a firewall rule.

- Declarative & versioned: part of the cluster manifests, under source-control.

I scoped the policy to the Nightscout namespace only (it’s the only one currently vulnerable). The policy allows DNS lookups, access to the MongoDB instance that Nightscout uses, and any internal k8s services. All other outbound traffic is denied.

Tip: An empty namespace selector allows all pods in any namespace unless you narrow it. To restrict egress to specific internal services, add a pod selector and specify labels.
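The applied manifest is not shown above, so the following is only a sketch of a policy with the same shape, written as a JavaScript object and printed as JSON (which `kubectl apply -f -` accepts alongside YAML). The policy name, namespace, MongoDB label, and port are assumptions for illustration.

```javascript
// All names and labels here (policy name, namespace, app label, port) are assumptions.
function buildEgressPolicy({ namespace, mongoLabels, mongoPort = 27017 }) {
  return {
    apiVersion: 'networking.k8s.io/v1',
    kind: 'NetworkPolicy',
    metadata: { name: 'restrict-egress', namespace },
    spec: {
      podSelector: {},          // applies to every pod in the namespace
      policyTypes: ['Egress'],  // egress not matched by a rule below is denied
      egress: [
        {
          // DNS lookups on port 53, kept explicit so it survives narrowing the last rule
          to: [{ namespaceSelector: {} }],
          ports: [
            { protocol: 'UDP', port: 53 },
            { protocol: 'TCP', port: 53 },
          ],
        },
        {
          // MongoDB pods in the same namespace, selected by label
          to: [{ podSelector: { matchLabels: mongoLabels } }],
          ports: [{ protocol: 'TCP', port: mongoPort }],
        },
        {
          // Any pod in any namespace; add a podSelector with labels to narrow this
          to: [{ namespaceSelector: {} }],
        },
      ],
    },
  };
}

const policy = buildEgressPolicy({ namespace: 'nightscout', mongoLabels: { app: 'mongodb' } });
console.log(JSON.stringify(policy, null, 2));
```

Because no rule names an external address, traffic leaving the cluster is not matched by any rule and is dropped, provided the cluster's network plugin enforces NetworkPolicies.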

After applying the policy, I ran the classic sanity check:

Both commands timed out, confirming that outbound HTTP/HTTPS traffic was blocked. The internal MongoDB connection remained unaffected.
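The same check can be scripted so it is repeatable from inside a pod. The sketch below is an assumption about how one might do it with Node's built-in `net` module; the target names in the usage comment are placeholders, not the hosts used in the original test.

```javascript
const net = require('node:net');

// Resolve to true if a TCP connection opens within timeoutMs, false otherwise.
function canConnect(host, port, timeoutMs = 3000) {
  return new Promise((resolve) => {
    const socket = net.connect({ host, port });
    const timer = setTimeout(() => finish(false), timeoutMs); // silently dropped packets end here
    function finish(ok) {
      clearTimeout(timer);
      socket.destroy();
      resolve(ok);
    }
    socket.once('connect', () => finish(true));
    socket.once('error', () => finish(false)); // refused, unreachable, or DNS failure
  });
}

async function main() {
  // Hypothetical usage: node egress-check.js example.com:443 mongodb:27017
  for (const target of process.argv.slice(2)) {
    const [host, port] = target.split(':');
    const ok = await canConnect(host, Number(port));
    console.log(target, ok ? 'reachable' : 'blocked');
  }
}

main();
```

With the policy in place, external targets should report `blocked` and the database should report `reachable`; any other combination means the policy needs another look.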

Mitigation Checklist

- Audit all pods for Axios (or other vulnerable deps).

- Check whether they depend on external HTTP calls.

- Classify each pod as “vulnerable”, “safe”, or “unknown/needs review”.

- For vulnerable pods, schedule a dependency upgrade.

- Apply NetworkPolicies to block external traffic until the upgrade is merged.

- Verify that internal dependencies (e.g., Mongo, Redis) remain reachable.

- Repeat the audit quarterly or whenever a new deployment occurs.

| Pod | Vulnerable? | Mitigation | Final Status |
|---|---|---|---|
| Nightscout | Yes | NetworkPolicy + upgrade scheduled | In progress |
| Uptime Kuma | Unconfirmed | Inspect redirect handling, policy applied | In progress |
| Outline | No | Already patched | ✅ |
| OpenClaw | No | Already patched | ✅ |

Lessons Learned & Limitations

- Dependency chaos: Even a small microservice that only imports Axios can become an attack vector.

- NetworkPolicy is double-edged: While effective, it can break legitimate traffic if misconfigured. Always test in a dev namespace first.

- Audit coverage: The container command I used assumes the dependency directory is present. If a service uses a different package manager or bundles dependencies differently, the script might miss it. A more robust approach is to ship a small Go or Rust binary that parses the package lockfiles directly.

- Patch speed: The most reliable fix is to upgrade Axios. In environments with strict release cycles, you may need a roll-out strategy to avoid breaking production.

- Zero-trust vs. business needs: Strict NetworkPolicies can hinder cross-namespace communication. Align with application owners before hardening.

Next Steps

- Upgrade Axios to 1.8.2+ in Nightscout’s Dockerfile.

- Once verified, remove the NetworkPolicy to restore normal egress.

- Implement a CI pipeline that flags any new Docker image relying on a vulnerable Axios version.

- Extend the scan to HTTP clients in other languages (Python, Ruby) to catch similar redirect issues.

- Document the incident in our internal Post-mortem repo for future reference.

Tip: A GitHub Actions workflow can run an audit on every commit and abort the build if a vulnerable version is detected.
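As one possible shape for such a CI gate, the sketch below reads an npm `package-lock.json` (lockfileVersion 2 or 3, where installed packages are keyed by path) and sets a failing exit code when a recorded Axios version matches a caller-supplied predicate. The file layout is the npm one; the function names and the predicate are assumptions, and other package managers would need their own parser.

```javascript
const fs = require('node:fs');

// Collect every Axios version recorded in a parsed package-lock.json,
// including nested copies such as "node_modules/foo/node_modules/axios".
function axiosVersionsInLockfile(lock) {
  const versions = new Set();
  for (const [pkgPath, meta] of Object.entries(lock.packages ?? {})) {
    const isAxios =
      pkgPath === 'node_modules/axios' || pkgPath.endsWith('/node_modules/axios');
    if (isAxios && meta.version) versions.add(meta.version);
  }
  return [...versions];
}

// Mark the process as failed when any recorded version matches the predicate.
// isVulnerable is supplied by the caller, e.g. a version-range check.
function checkLockfile(file, isVulnerable) {
  const lock = JSON.parse(fs.readFileSync(file, 'utf8'));
  const bad = axiosVersionsInLockfile(lock).filter(isVulnerable);
  if (bad.length > 0) {
    console.error(`Vulnerable Axios version(s) in ${file}: ${bad.join(', ')}`);
    process.exitCode = 1; // a non-zero exit code fails the CI step
  }
  return bad;
}
```

Checking the lockfile rather than the installed tree catches transitive copies of Axios before an image is ever built.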

TL;DR

- CVE-2025-27152: SSRF + credential leakage via Axios redirects.

- Our cluster: Nightscout vulnerable (0.21.4), possibly Uptime Kuma (0.30.3).

- Mitigation: NetworkPolicy blocks all outbound HTTP/HTTPS from the vulnerable namespace.

- Plan: Upgrade Axios in all images, then trim NetworkPolicy.

- Takeaway: Even popular, well-maintained libraries can introduce serious vulnerabilities; a layered defense strategy (dependency tracking + network isolation) is essential.

I hope this article helps you spot and contain SSRF attacks in your own clusters. Remember: a small change in a dependency can open a big backdoor - stay vigilant, stay patched.